Data Modeling Is Not Optional: Why a Strong Data Model Defines the Success — or Failure — of Any System

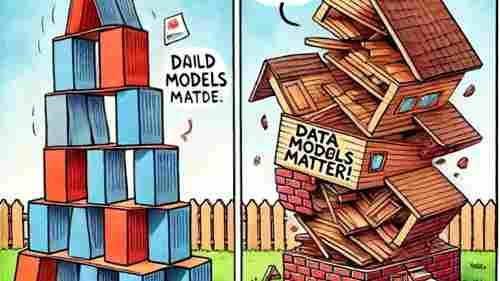

If there’s one mistake I’ve seen repeatedly in my career, it’s this:

Teams rushing to build systems without properly designing the data model.

They focus on APIs.

They focus on UI.

They focus on features.

They focus on deadlines.

And they treat the data model as something secondary.

That is a strategic error.

Because in the long run, systems are not defined by their interface.

They are defined by their data structure.

A Data Model Is the Foundation — Not a Technical Detail

A data model is not just a set of tables and relationships.

It is the formal representation of business reality.

It defines:

- What entities exist

- How they relate

- What rules must be enforced

- What constraints protect integrity

- How history is tracked

- How performance scales

If you misunderstand the business, your model collapses.

If you oversimplify relationships, inconsistencies appear.

If you overcomplicate the structure, performance suffers.

A data model is architecture.

And architecture determines longevity.

The Three Levels — And Why Most Projects Ignore Them

A mature modeling process moves through:

1. Conceptual Model

Business-focused.

Entities and relationships without technical noise.

2. Logical Model

More detailed.

Attributes, cardinalities, constraints.

3. Physical Model

Implementation in SQL Server, Oracle, PostgreSQL, MySQL, etc.

Indexes, partitioning, data types, storage decisions.
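To make the three levels concrete, here is a minimal sketch of the same small domain expressed at each level. The `Customer` and `Order` entities, the `Attribute` helper, and every table name are hypothetical, and SQLite stands in for whichever engine you actually use.

```python
import sqlite3
from dataclasses import dataclass

# Conceptual level: business entities and how they relate, no technical detail.
# "Customer" and "Order" are hypothetical entities chosen for illustration.
CONCEPTUAL = {
    "entities": ["Customer", "Order"],
    "relationships": [("Customer", "places", "Order")],
}


# Logical level: attributes and constraints, still independent of any engine.
@dataclass(frozen=True)
class Attribute:
    name: str
    required: bool = True
    unique: bool = False


LOGICAL = {
    "Customer": [Attribute("customer_id", unique=True), Attribute("email", unique=True)],
    "Order": [Attribute("order_id", unique=True), Attribute("customer_id"), Attribute("total")],
}

# Physical level: concrete types, keys, and indexes for one engine (SQLite here).
PHYSICAL_DDL = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    email       TEXT NOT NULL UNIQUE
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    total       NUMERIC NOT NULL CHECK (total >= 0)
);
CREATE INDEX idx_order_customer ON customer_order(customer_id);
"""


def build_physical_model() -> list[str]:
    """Create the physical schema in memory and return the table names."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(PHYSICAL_DDL)
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    conn.close()
    return [name for (name,) in rows]


tables = build_physical_model()
```

Only the last step mentions data types or indexes. The first two can be reviewed with business stakeholders before a single table exists.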

The problem?

Many projects jump directly to the physical model.

They start creating tables without deeply understanding business rules.

That shortcut becomes expensive later.

The Real Benefits of a Well-Designed Data Model

Let me be practical.

A strong data model gives you:

1. Performance That Scales

Indexes only help if the structure is correct.

If relationships are poorly defined, no index will save you.

Proper modeling reduces:

- Excessive joins

- Data duplication

- Update anomalies

- Lock contention

Performance begins at design — not tuning.
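A small sketch of why structure comes before indexing. It assumes a hypothetical product catalog where tags are either packed into one delimited column or modeled as their own relationship, and uses SQLite's `EXPLAIN QUERY PLAN` to compare how each shape is searched. Both tables have an index; only one of them can use it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Poor structure: several tags packed into one delimited column.
CREATE TABLE product_flat (
    product_id INTEGER PRIMARY KEY,
    name       TEXT NOT NULL,
    tags       TEXT NOT NULL
);
CREATE INDEX idx_flat_tags ON product_flat(tags);

-- Modeled relationship: one row per (product, tag) pair.
CREATE TABLE product_tag (
    product_id INTEGER NOT NULL,
    tag        TEXT NOT NULL,
    PRIMARY KEY (product_id, tag)
);
CREATE INDEX idx_product_tag_tag ON product_tag(tag);
""")


def plan(sql: str) -> str:
    """Return the planner's description of how a query would run."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    # The human-readable detail is the last column of each row.
    return " | ".join(row[-1] for row in rows)


# A substring match with a leading wildcard cannot seek into the index.
flat_plan = plan("SELECT name FROM product_flat WHERE tags LIKE '%red%'")

# An equality match on the modeled column can.
modeled_plan = plan("SELECT product_id FROM product_tag WHERE tag = 'red'")

print(flat_plan)
print(modeled_plan)
```

The exact wording of the plan varies by SQLite version, but the first query reports a scan of the whole table while the second reports an index search.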

2. Data Integrity by Design

Primary keys.

Foreign keys.

Unique constraints.

Check constraints.

These are not decorative.

They are business rule enforcement mechanisms.

When teams remove constraints “to improve performance,” they often introduce silent corruption.

A system without enforced integrity becomes unreliable.

And unreliable data kills trust.
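Here is a minimal sketch of constraints acting as business rules, using SQLite as a stand-in engine. The `customer` and `invoice` tables and the `try_insert` helper are hypothetical. Note that SQLite only enforces foreign keys after `PRAGMA foreign_keys = ON`; other engines behave differently.

```python
import sqlite3


def try_insert(conn, sql, params):
    """Run an insert; return None on success or the constraint error text."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
        return None
    except sqlite3.IntegrityError as exc:
        return str(exc)


conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK enforcement off by default
conn.executescript("""
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    email       TEXT NOT NULL UNIQUE
);
CREATE TABLE invoice (
    invoice_id  INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    amount      NUMERIC NOT NULL CHECK (amount > 0)
);
""")

# A valid customer is accepted.
ok = try_insert(conn, "INSERT INTO customer VALUES (?, ?)", (1, "ana@example.com"))

# Unique constraint: the same email cannot belong to two customers.
dup_email = try_insert(conn, "INSERT INTO customer VALUES (?, ?)", (2, "ana@example.com"))

# Foreign key: an invoice cannot point at a customer that does not exist.
orphan = try_insert(conn, "INSERT INTO invoice VALUES (?, ?, ?)", (1, 99, 50))

# Check constraint: an invoice amount must be positive.
negative = try_insert(conn, "INSERT INTO invoice VALUES (?, ?, ?)", (2, 1, -5))

# A valid invoice for an existing customer is accepted.
valid_invoice = try_insert(conn, "INSERT INTO invoice VALUES (?, ?, ?)", (3, 1, 120))

invoice_count = conn.execute("SELECT COUNT(*) FROM invoice").fetchone()[0]
```

Every rejected row here is a bug the application never had to detect on its own. Remove the constraints and all five inserts succeed silently.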

3. Maintainability Over Time

A clean model makes evolution possible.

A chaotic model creates fear.

When developers are afraid to touch the schema because they don’t understand it, you have already lost architectural clarity.

Good modeling reduces long-term technical debt.

4. Scalability Without Rewriting Everything

Bad modeling decisions don’t hurt immediately.

They hurt when:

- Data grows 10x.

- Reporting requirements expand.

- Integrations increase.

- Regulatory requirements change.

Then suddenly, the original shortcuts become migration projects.

And migrations are expensive.

The Hidden Cost of a Bad Data Model

Let’s talk about consequences.

A poorly designed model causes:

- Slow queries that no index can fix.

- Inconsistent reporting.

- Duplicate records.

- Referential chaos.

- Massive locking issues.

- Complex workarounds in application code.

- Expensive refactoring projects.

- Frustrated developers.

- Unstable BI environments.

And worst of all:

Loss of business credibility.

When executives see inconsistent numbers between reports, the problem is rarely Power BI.

It’s almost always the data model.

Over-Normalization vs Under-Normalization

There is no dogma here.

Extreme normalization can cause:

- Excessive joins

- Performance degradation

- Complex query logic

Extreme denormalization can cause:

- Data redundancy

- Update anomalies

- Integrity problems

The key is balance.

Normalize for integrity.

Denormalize strategically for performance — not by accident.

Architecture is about trade-offs.
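The update anomaly is easy to reproduce. This is a small sketch with hypothetical tables, again using SQLite: the same customer's city is stored once in the normalized shape and repeated on every row in the denormalized one.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Denormalized: the customer's city is repeated on every order row.
CREATE TABLE order_flat (
    order_id      INTEGER PRIMARY KEY,
    customer_name TEXT NOT NULL,
    customer_city TEXT NOT NULL
);
INSERT INTO order_flat VALUES (1, 'Ana', 'Lisbon'), (2, 'Ana', 'Lisbon');

-- Normalized: the city is stored once and orders reference the customer.
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    city        TEXT NOT NULL
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id)
);
INSERT INTO customer VALUES (1, 'Ana', 'Lisbon');
INSERT INTO customer_order VALUES (1, 1), (2, 1);
""")

# A partial update (only one row touched) leaves the flat table contradicting itself.
conn.execute("UPDATE order_flat SET customer_city = 'Porto' WHERE order_id = 1")
flat_cities = {
    city
    for (city,) in conn.execute(
        "SELECT customer_city FROM order_flat WHERE customer_name = 'Ana'"
    )
}

# The same change in the normalized model is one row, so every order agrees.
conn.execute("UPDATE customer SET city = 'Porto' WHERE customer_id = 1")
normalized_cities = {
    city
    for (city,) in conn.execute(
        """
        SELECT c.city
        FROM customer_order o
        JOIN customer c ON c.customer_id = o.customer_id
        """
    )
}
```

After the update, `flat_cities` holds two different cities for one customer, while `normalized_cities` holds one. The price of the normalized shape is the join, which is exactly the trade-off to weigh deliberately.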

Keys Matter More Than People Think

Choosing among these key types is not trivial:

- Natural keys

- Surrogate keys

- Composite keys

Surrogate keys simplify joins and stay stable when business values change.

Natural keys tie identity to real business attributes.

But inconsistency in key strategy creates long-term pain.

Key strategy should be deliberate — not improvised.
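One deliberate strategy, sketched here under assumptions, is to combine the options: a surrogate primary key for joins, the natural key kept as a unique constraint, and a composite key where identity only makes sense inside a parent. The `product` and `order_line` tables and the SKU values are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE product (
    product_id INTEGER PRIMARY KEY,        -- surrogate key: stable, no business meaning
    sku        TEXT NOT NULL UNIQUE,       -- natural key: still enforced
    name       TEXT NOT NULL
);
CREATE TABLE order_line (
    order_id   INTEGER NOT NULL,
    line_no    INTEGER NOT NULL,
    product_id INTEGER NOT NULL REFERENCES product(product_id),
    PRIMARY KEY (order_id, line_no)        -- composite key: identity within the order
);
INSERT INTO product VALUES (1, 'SKU-001', 'Keyboard');
INSERT INTO order_line VALUES (100, 1, 1), (100, 2, 1);
""")

# The business renames the SKU. Child rows are untouched because they
# reference the surrogate key, not the natural one.
with conn:
    conn.execute("UPDATE product SET sku = 'KB-001' WHERE product_id = 1")

lines_for_renamed = conn.execute(
    """
    SELECT COUNT(*)
    FROM order_line l
    JOIN product p ON p.product_id = l.product_id
    WHERE p.sku = 'KB-001'
    """
).fetchone()[0]

# The natural key is still protected against duplicates.
try:
    with conn:
        conn.execute("INSERT INTO product VALUES (2, 'KB-001', 'Other keyboard')")
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True
```

This is one reasonable convention, not the only one. What matters is applying the same convention across the whole schema.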

Data Types Are Strategic Decisions

Type choices include:

- INT vs BIGINT

- DATETIME vs DATETIME2

- NVARCHAR vs VARCHAR

- DECIMAL precision levels

Each of them affects:

- Storage

- Performance

- Index size

- Query execution plans

Small decisions accumulate into large performance differences.
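The effects can be illustrated outside any database. This sketch uses Python's standard library to mimic three of the choices above: a 32-bit versus 64-bit integer, a single-byte versus two-byte character encoding (roughly the VARCHAR versus NVARCHAR difference in SQL Server), and binary floating point versus a fixed-precision decimal. The helper name `fits_in_int` is hypothetical, and real engines add their own storage overhead.

```python
import struct
from decimal import Decimal

INT_MAX = 2**31 - 1      # largest value of a signed 32-bit INT
BIGINT_MAX = 2**63 - 1   # largest value of a signed 64-bit BIGINT


def fits_in_int(value: int) -> bool:
    """True if value can be packed as a signed 32-bit integer."""
    try:
        struct.pack("<i", value)
        return True
    except struct.error:
        return False


# Storage per value: 4 bytes vs 8 bytes, which also widens every index on the column.
int_bytes = struct.calcsize("<i")
bigint_bytes = struct.calcsize("<q")

# Single-byte vs two-byte text storage for the same ASCII string.
single_byte_len = len("data model".encode("latin-1"))
two_byte_len = len("data model".encode("utf-16-le"))

# Binary floating point cannot represent 0.10 exactly; a fixed-precision decimal can.
float_total = sum([0.10] * 3)
decimal_total = sum([Decimal("0.10")] * 3, Decimal("0"))
```

An identity column that outgrows `INT_MAX` forces a type change on the table and on every foreign key that references it. A money column stored as a float produces totals that do not quite add up.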

Documentation Is Not Bureaucracy

A well-documented data model:

- Reduces onboarding time.

- Improves cross-team collaboration.

- Prevents knowledge silos.

- Preserves architectural intent.

Undocumented models become tribal knowledge.

And tribal knowledge is fragile.
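Documentation does not have to be written by hand. As a minimal sketch, a basic data dictionary can be generated from the schema itself, so it cannot drift from what is deployed. This version relies on SQLite's `PRAGMA table_info`; the `data_dictionary` function and the `customer` table are hypothetical, and other engines expose the same information through their own catalog views.

```python
import sqlite3


def data_dictionary(conn: sqlite3.Connection) -> list[str]:
    """Return one plain-text line per column, read from the live schema."""
    lines = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # Table names come from the catalog itself, not from user input.
        # PRAGMA table_info yields: cid, name, type, notnull, dflt_value, pk
        for _cid, name, col_type, notnull, _default, pk in conn.execute(
            f"PRAGMA table_info({table})"
        ):
            flags = []
            if pk:
                flags.append("PK")
            if notnull:
                flags.append("NOT NULL")
            lines.append(f"{table}.{name}: {col_type} {' '.join(flags)}".rstrip())
    return lines


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customer (customer_id INTEGER PRIMARY KEY, email TEXT NOT NULL)"
)
dictionary = data_dictionary(conn)
for line in dictionary:
    print(line)
```

A generated listing covers structure only. The intent behind each table still has to be written down by the people who designed it.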

The Pros of Investing in Strong Data Modeling

- Long-term performance stability

- Lower maintenance costs

- Easier system evolution

- Better BI and analytics reliability

- Stronger governance

- Reduced risk of corruption

- Higher developer productivity

It is strategic infrastructure.

The Cons (Yes, There Are Trade-Offs)

Let’s be realistic.

Proper data modeling requires:

- More time upfront

- Business involvement

- Design reviews

- Iteration cycles

- Skilled architects

In fast-moving startups, teams sometimes choose speed over structure.

That can work temporarily.

But eventually, growth exposes structural weaknesses.

You either invest early — or pay later.

Usually much more.

The Hard Truth

You can rebuild an API.

You can redesign a front-end.

You can refactor services.

But rewriting a broken database schema in a live system?

That is painful.

Data models become rigid because they accumulate production data.

Fixing them later is far harder than designing them correctly from the beginning.

My Personal View After Years in the Field

The systems that aged well had strong data modeling foundations.

The systems that required constant firefighting usually had:

- Poor normalization decisions

- Missing constraints

- No conceptual modeling phase

- Performance-first shortcuts

- Inconsistent key definitions

Data modeling is not glamorous.

It doesn’t get applause.

But it defines the durability of everything built on top of it.

Final Thoughts

If you are building a system:

Slow down before creating tables.

Understand the business.

Model the relationships.

Define the constraints.

Anticipate growth.

Think about concurrency.

Think about reporting.

Think about future integrations.

Because at the end of the day:

A database is not just storage.

It is the structural memory of the organization.

And memory must be designed carefully.

