Patroni, Keepalived, and HAProxy — When and Why Should You Use Each?

One of the most common questions that arises when designing a PostgreSQL high-availability cluster is:

“If Keepalived already performs IP failover via VRRP and I can check Patroni’s REST API in a health script, why would I still need HAProxy?”

This question came from a student building a 3-node PostgreSQL cluster using Patroni and etcd. Because their goal was simply to ensure read/write access to the current leader, they asked whether HAProxy is truly necessary, or whether a VIP managed by Keepalived would be enough.

Let’s break this down clearly.

The Student’s Question

“If I script a REST API check against Patroni and perform TCP checks in Keepalived, I can get good HA with just three nodes. If I only care about read/write access to the leader, why not just use Keepalived with a VIP pointing directly to PostgreSQL? Isn’t adding HAProxy overkill?”

It’s a fair question.

The Short Answer

Yes — for small environments, a 3-node cluster using:

- Patroni

- etcd

- Keepalived (with proper health checks)

can absolutely provide solid high availability.

However, the architecture taught in the course is designed for production-grade, mission-critical environments, not only for basic availability.

And that distinction is important.

When Keepalived Alone Can Be Enough

If you:

- Have a small workload

- Can tolerate brief connection errors during failover

- Do not need read scaling

- Do not need advanced routing

- Are managing a non-critical system (for example, a monitoring database like Zabbix)

Then using only Keepalived with a well-written health check script can be perfectly reasonable.

In that setup:

Application

↓

VIP (Keepalived)

↓

Current Patroni Leader

Simple. Clean. Functional.

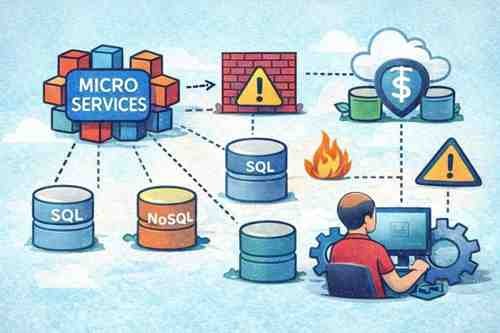
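A Keepalived health check of the kind described above could look roughly like the following. This is a minimal sketch, not a hardened script: it assumes Patroni's REST API is reachable on its default port 8008 on the local node, and the names `PATRONI_URL`, `http_status`, and `exit_code_for` are illustrative. Patroni answers `GET /primary` with HTTP 200 only on the node that is currently the leader, and Keepalived treats a zero exit code as "healthy".

```python
import sys
import urllib.error
import urllib.request

# Assumption: Patroni's REST API listens on its default port 8008 on this node.
PATRONI_URL = "http://127.0.0.1:8008/primary"


def http_status(url: str, timeout: float = 2.0) -> int:
    """Return the HTTP status code for url, or 0 if the request fails."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 503 when this node is not the primary
    except (urllib.error.URLError, OSError):
        return 0  # Patroni unreachable: treat as unhealthy


def exit_code_for(status: int) -> int:
    """Map an HTTP status to a script exit code (0 = healthy, 1 = failed)."""
    return 0 if status == 200 else 1


if __name__ == "__main__":
    sys.exit(exit_code_for(http_status(PATRONI_URL)))
```

Keepalived would run a script like this on every node, so that only the node passing the check remains eligible to hold the VIP. The short timeout is a design choice: a hung Patroni should count as a failure rather than stall the check.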

Why Production Architectures Add HAProxy

When someone decides to build a 3-node PostgreSQL cluster, it usually means:

- The application is important

- Downtime has business impact

- Data integrity matters

- High availability is a requirement

In that context, the incremental cost of adding proper load balancing and separation of concerns is typically very small compared to the operational risk it mitigates.

Here’s why HAProxy is commonly added.

1️⃣ Separation of Responsibilities

Keepalived handles:

- IP failover (Layer 2/3)

- VRRP election

- Moving a virtual IP between nodes

On its own, without custom health scripts, it does not:

- Understand PostgreSQL roles

- Manage connection routing

- Handle retries

- Perform advanced health logic

HAProxy handles:

- Leader detection via Patroni REST API

- Smart routing

- Avoiding traffic to replicas

- Connection handling and connection-level retries (in-flight queries are not replayed)

This clean separation increases reliability.
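The leader-detection step can be illustrated with a small sketch. It is not how HAProxy is implemented; it only mirrors the decision: probe each node's Patroni `/primary` endpoint and send traffic where the answer is HTTP 200. The node addresses and the `probe` callable are hypothetical, and the "exactly one leader" rule is a stricter choice made for this example (HAProxy itself routes to every server whose check passes, and Patroni's leader lock is what keeps that to one node).

```python
from typing import Callable, List, Optional

# Hypothetical node addresses; 8008 is Patroni's default REST API port.
NODES = ["10.0.0.11", "10.0.0.12", "10.0.0.13"]


def find_leader(nodes: List[str], probe: Callable[[str], int]) -> Optional[str]:
    """Return the single node whose /primary check returns 200, else None."""
    leaders = [n for n in nodes if probe(f"http://{n}:8008/primary") == 200]
    # Route only when exactly one node claims the primary role.
    return leaders[0] if len(leaders) == 1 else None
```

Passing the probe in as a function keeps the routing decision separate from the network call, which is the same separation of responsibilities this section describes.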

2️⃣ Cleaner Failover Behavior

During a failover:

- Old leader fails

- Patroni promotes a new leader

- Clients reconnect

Without HAProxy, there can be short windows where:

- Clients hit a node that is no longer leader

- PostgreSQL is running but in read-only mode

- Applications receive “read-only transaction” errors

HAProxy narrows these windows by health-checking every node through Patroni’s REST API and routing only to the node that currently reports itself as leader.
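How long such a window lasts depends largely on the health-check settings. HAProxy marks a server down after `fall` consecutive failed checks and up again after `rise` consecutive successful ones. The sketch below models that counting logic and gives a rough upper bound on detection time. The class and function names are illustrative, the bound ignores check timeouts, and starting in the DOWN state is a choice made for this example rather than a statement about HAProxy's startup behaviour.

```python
class HealthTracker:
    """Tracks one backend using HAProxy-style rise/fall thresholds."""

    def __init__(self, rise: int = 2, fall: int = 3) -> None:
        self.rise, self.fall = rise, fall
        self.up = False   # sketch assumption: no traffic until proven healthy
        self.streak = 0   # consecutive checks contradicting the current state

    def record(self, check_passed: bool) -> bool:
        """Feed one check result; return whether the backend is now UP."""
        if check_passed == self.up:
            self.streak = 0  # result agrees with the current state
            return self.up
        self.streak += 1
        needed = self.fall if self.up else self.rise
        if self.streak >= needed:
            self.up = not self.up
            self.streak = 0
        return self.up


def worst_case_detection_seconds(interval: float, fall: int) -> float:
    """Rough upper bound on how long a failed leader keeps receiving traffic."""
    return interval * fall
```

With a 3-second check interval and `fall` set to 3, for example, a demoted leader could keep receiving connections for up to roughly 9 seconds, so tightening these values trades faster detection against more sensitivity to transient glitches.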

3️⃣ Scalability and Future Growth

Even if today you only need write access to the leader, tomorrow you might need:

- Read replicas for scaling

- Read/write split

- Connection limits and queuing (true connection pooling needs a pooler such as PgBouncer)

- Advanced traffic control

If HAProxy is already part of the architecture, scaling becomes much easier without redesign.
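A read/write split builds on the same health checks: Patroni answers `GET /primary` with 200 on the leader and `GET /replica` with 200 on a running replica, so two backend pools can be derived from the same cluster. The following is a sketch of that idea only; `build_pools` and the `probe` callable are hypothetical names, and a real deployment would express this as two HAProxy listeners rather than application code.

```python
from typing import Callable, Dict, List


def build_pools(
    nodes: List[str], probe: Callable[[str, str], int]
) -> Dict[str, List[str]]:
    """Group nodes into write and read pools from Patroni role checks."""
    writers = [n for n in nodes if probe(n, "/primary") == 200]
    readers = [n for n in nodes if probe(n, "/replica") == 200]
    return {"write": writers, "read": readers}
```

Applications would then use one endpoint for writes and another for reads, and a failover only changes which node lands in which pool.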

4️⃣ Fault Isolation

In more robust designs:

Application

↓

VIP (Keepalived)

↓

HAProxy (Primary + Backup)

↓

Patroni / PostgreSQL Cluster

Keepalived provides HA for HAProxy. HAProxy provides smart routing for PostgreSQL.

Each layer has a clear responsibility.

This design prevents tight coupling between IP failover logic and database role awareness.
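At the Keepalived layer, the decision is purely about which load balancer holds the VIP. A simplified model of that election: each node has a base VRRP priority, a tracked script with a negative weight lowers the priority while HAProxy is unhealthy, and the node with the highest effective priority becomes master. The node names, priorities, and weight below are assumptions for illustration, and tie-breaking and preemption settings are not modelled.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VrrpNode:
    name: str
    priority: int        # base VRRP priority
    haproxy_ok: bool     # result of the tracked health script
    weight: int = -20    # negative weight: applied while the script fails

    def effective_priority(self) -> int:
        """Base priority, reduced while the tracked script is failing."""
        return self.priority + (0 if self.haproxy_ok else self.weight)


def elect_master(nodes: List[VrrpNode]) -> str:
    """Return the name of the node with the highest effective priority."""
    return max(nodes, key=lambda n: n.effective_priority()).name
```

Note that nothing in this model knows about PostgreSQL roles, which is the decoupling the layered design aims for.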

“But Isn’t 8 VMs Overkill?”

You’ll often see reference architectures with:

- 3 PostgreSQL nodes

- 3 etcd nodes

- 2 HAProxy/Keepalived nodes

Yes — that’s 8 VMs.

For small environments, that can absolutely be excessive.

But for enterprise production systems, this design provides:

- Predictable failover behavior

- Proper quorum handling

- Network isolation

- Reduced blast radius

- Operational stability

The course focuses on these production-ready patterns because they are safer, cleaner, and scale better over time.
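The "proper quorum handling" point comes down to simple arithmetic: a majority-based store such as etcd needs more than half of its members available, so a cluster of n members requires floor(n/2) + 1 of them and tolerates the rest failing. A short sketch, with illustrative function names:

```python
def quorum(members: int) -> int:
    """Smallest number of members that forms a majority."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many members can fail while a majority remains."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in (1, 2, 3, 4, 5):
        print(n, quorum(n), tolerated_failures(n))
```

Three etcd members tolerate one failure, and a fourth member adds no extra tolerance, which is why odd-sized clusters are the usual choice.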

The Core Philosophy

Architecture should match business requirements.

If this is:

- A lab

- A small internal tool

- A non-critical service

→ A simplified 3-node setup is perfectly valid.

If this is:

- A revenue-generating system

- A mission-critical database

- An environment with strict SLAs

→ Using HAProxy alongside Keepalived is not overkill — it is responsible engineering.

When someone is already investing in a 3-node PostgreSQL cluster, it is usually because the system matters. In that case, implementing the more robust pattern typically adds little cost while significantly reducing operational risk.

Final Takeaway

Yes — you can build high availability with only Patroni + etcd + Keepalived.

But the method taught in the course is aimed at robust production environments, where:

- Failovers must be clean

- Routing must be intelligent

- Risk must be minimized

- Scaling must be possible without redesign

Both approaches are valid.

The difference is not about complexity.

It’s about the level of risk you are willing to accept.

Want to learn how to set up a Cluster with Patroni at a great price?

https://www.udemy.com/course/postgres-cluster/?couponCode=A8384B9536F7FEBE5AF8
